"Every man has a right to utter what he thinks truth, and every other man has a right to knock him down for it." - Samuel Johnson

I have a six-sided die. I'm going to roll it ten times, and record each roll. And when I'm done, I'm going to have an incredibly rare, bet-you-can't-reproduce-it result!

Look at that! Ten rolls of a six-sided die, and I got: 3, 2, 3, 5, 5, 5, 4, 1, 4, and 3! What a glorious, odds-defying sequence of events!

In fact, if you took a fair six-sided die and rolled it ten times, you'd have less than a 1-in-60,000,000 chance of getting that same sequence of outcomes! And yet, there's no surprise there, or at least there shouldn't be.

Image credit: retrieved from http://dreamstime.com/.

Because every possible outcome of ten dice rolls -- all 60,466,176 of them -- was equally likely. But you might wonder if there was something special about the outcomes that I rolled. After all:

- There are no 6's in any of my rolls, something that would only happen 16% of the time if you roll a die ten times.

- I got six prime numbers in a row (the first six rolls), something that only happens 7.8% of the time.

- There are three 5's in a row in there, something that would only happen 3.7% of the time in ten rolls of a fair die.

- And the odds of rolling a six-sided die ten times and getting three 5's in a row and six primes in a row and also no 6's are only 0.046%!

That last figure, 0.046%, means there's just a one-in-2174 chance of that happening, or about the same odds as you and I entering a room with twenty-one adult gavials and randomly choosing the same tooth.
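The percentages in the list above look like quick estimates (for instance, eight possible starting positions times 1/216 for three 5's in a row). As a minimal sketch, assuming a fair die and independent rolls, the exact chance of seeing at least one run can be computed with a small dynamic program. The function name `prob_run` is made up for this illustration.

```python
from fractions import Fraction

def prob_run(n_rolls, run_length, p_success):
    """Exact chance that n_rolls independent rolls contain at least one
    run of run_length consecutive 'successes' (each with chance p_success)."""
    # state[j] = chance that no full run has occurred yet and the
    # current streak of successes has length j
    state = [Fraction(0)] * run_length
    state[0] = Fraction(1)
    for _ in range(n_rolls):
        new_state = [Fraction(0)] * run_length
        for streak, mass in enumerate(state):
            new_state[0] += mass * (1 - p_success)         # a miss resets the streak
            if streak + 1 < run_length:
                new_state[streak + 1] += mass * p_success  # the streak grows
            # otherwise the run is completed and this mass leaves the table
        state = new_state
    return 1 - sum(state)

no_sixes = Fraction(5, 6) ** 10                # no 6 in ten rolls
six_primes = prob_run(10, 6, Fraction(1, 2))   # 2, 3 and 5 are the primes on a die
three_fives = prob_run(10, 3, Fraction(1, 6))

for label, value in [("no 6's", no_sixes),
                     ("six primes in a row", six_primes),
                     ("three 5's in a row", three_fives)]:
    print(f"{label}: {float(value):.2%}")
```

Under these assumptions the exact values come out at roughly 16%, 4.7% and 3.1%. The run-type events are a bit rarer than the quick estimates suggest, because overlapping runs get counted more than once in the estimate, but the point of the argument does not change.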

Image credit: The Prague Zoo, http://www.zoopraha.cz/.

But the thing is, that sequence of ten die rolls was really randomly generated, and there are a whole bunch of equally unlikely things that didn't happen.

- I never rolled two consecutive 1's to give me snake eyes, something that happens 22% of the time in ten consecutive fair rolls.

- I didn't get six even numbers in a row, which should happen 7.8% of the time.

- I didn't get three rolls in a row with the sequence "1, 2, 3," which will happen 3.7% of the time in ten rolls.
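To get a feel for how many "remarkable" things were available to notice, here is a minimal simulation sketch, assuming a fair die. The six patterns checked are the ones listed in this post; the helper names (`has_run`, `has_sequence`, `remarkable`, `estimate`) and the choice of 20,000 trials are assumptions made for the example.

```python
import random

PRIMES = {2, 3, 5}
EVENS = {2, 4, 6}

def has_run(rolls, allowed, length):
    """True if `rolls` contains `length` consecutive values drawn from `allowed`."""
    streak = 0
    for r in rolls:
        streak = streak + 1 if r in allowed else 0
        if streak >= length:
            return True
    return False

def has_sequence(rolls, pattern):
    """True if `pattern` appears as consecutive rolls."""
    k = len(pattern)
    return any(rolls[i:i + k] == pattern for i in range(len(rolls) - k + 1))

def remarkable(rolls):
    """True if any of the six patterns mentioned in the post shows up."""
    return (6 not in rolls
            or has_run(rolls, PRIMES, 6)
            or has_run(rolls, {5}, 3)
            or has_run(rolls, {1}, 2)      # snake eyes on consecutive rolls
            or has_run(rolls, EVENS, 6)
            or has_sequence(rolls, [1, 2, 3]))

def estimate(trials=20_000, seed=0):
    """Fraction of simulated ten-roll sequences showing at least one pattern."""
    rng = random.Random(seed)
    hits = sum(remarkable([rng.randint(1, 6) for _ in range(10)])
               for _ in range(trials))
    return hits / trials

print(f"at least one pattern: {estimate():.1%}")
```

Even with only six patterns on the watch list, a sizable fraction of random sequences contains at least one of them, and a real observer has far more than six patterns in mind.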

In fact, this type of reasoning -- where you ascribe an undue cause to an unlikely outcome from a random process -- is a special type of logical fallacy known as the Inverse Gambler's Fallacy.

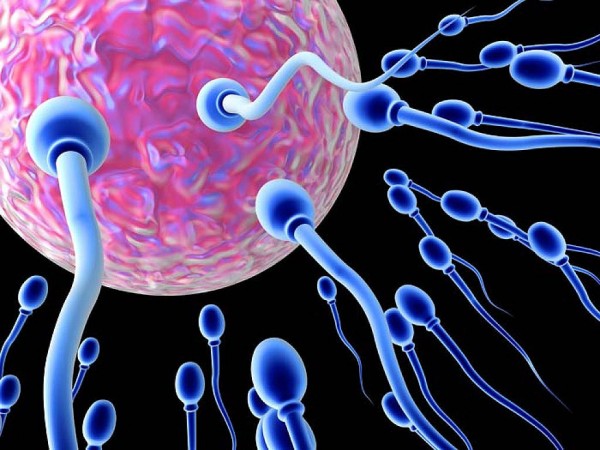

It's very unlikely that the particular sperm that fertilized the particular egg that made you would have been the one to do it, but it happened, and you're here. It's unlikely that the evolution of life on Earth would have proceeded exactly as it did, that the planet Earth would have formed consisting of each one of the atoms that makes it up, or that the finest details of cosmological evolution would have happened the exact way that they did to give us the Universe, today, exactly as we observe it.

Some of the things that happened were relatively mundane and fairly likely, others were somewhat unlikely and wouldn't occur very often if you started the Universe over with comparable initial conditions.

But it's a logical fallacy to state:

Because some thing (or combination of things) that was unlikely to occur wound up happening, our picture of how the Universe got to be this way is probably incorrect.

Because unlikely things happen all the time: that's part of what comes along with living in a chaotic Universe where many seemingly random outcomes occur. And people -- many of whom are often reasonable scientists otherwise -- use these unlikely outcomes from random events to try and sow doubt about the quality of the underlying scientific theories.

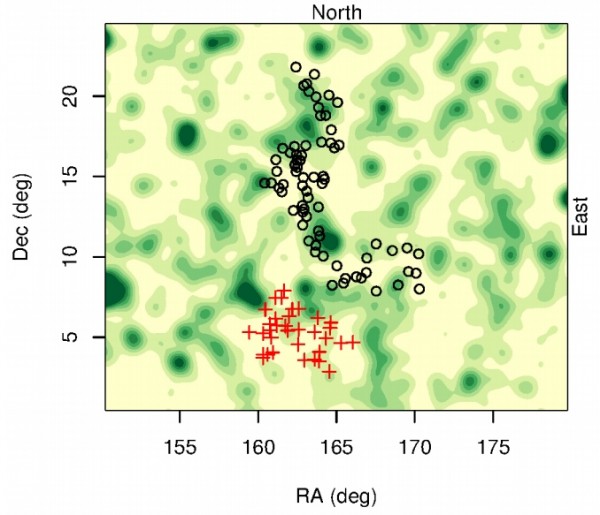

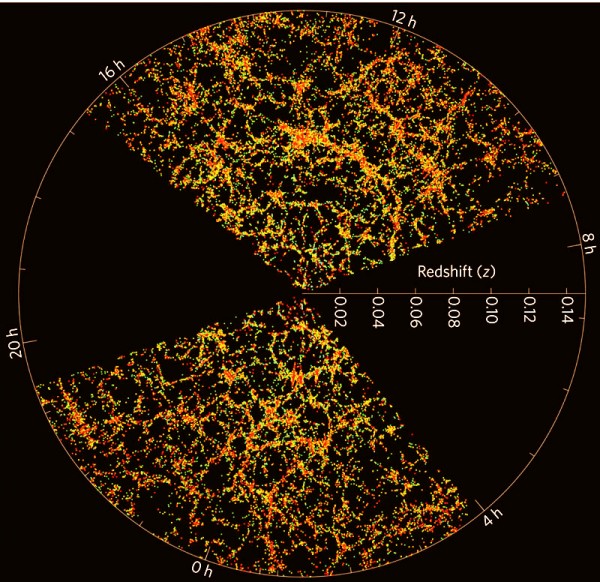

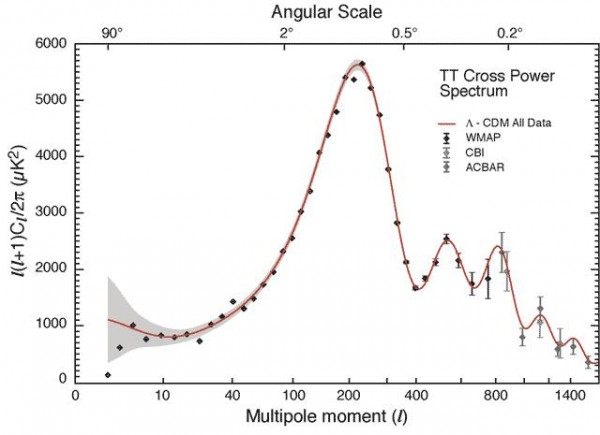

There are people who look at the quadrupole and octopole moments of the Cosmic Microwave Background -- or the first two points on the graph above -- and question the entirety of modern cosmology. Why? Because they state that the "odds" of having a Universe that conspired to give those two data points just randomly is relatively low. (But, for what it's worth, better than my dice-roll odds, atop.) When you hear the terminology "Axis of Evil" applied to cosmology, this is what they're talking about.

But there's nothing special at all about it: if we simulated our Universe millions of times, alignments like this in those two data points would occur hundreds of times. We just happen to live in a Universe where it did.

Image credit: X-ray: NASA/CXC/CfA/M.Markevitch et al.; Optical: NASA/STScI; Magellan/U.Arizona/D.Clowe et al.; Lensing Map: NASA/STScI; ESO WFI; Magellan/U.Arizona/D.Clowe et al.

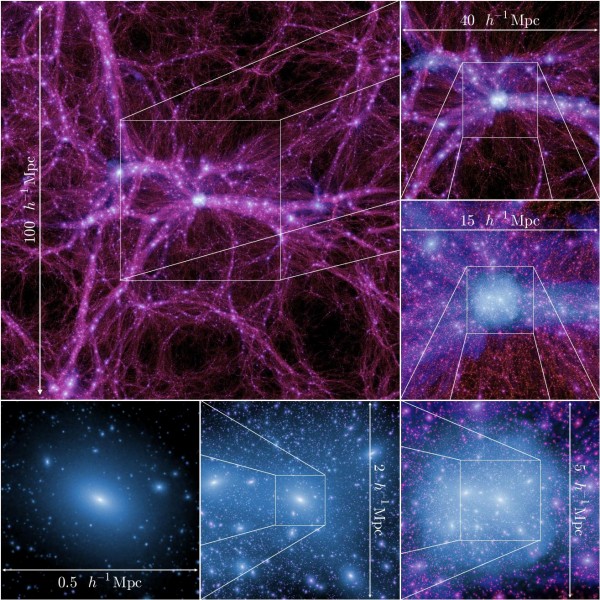

This is the Bullet Cluster, two large galaxy clusters in the process of colliding, with their hot, X-ray emitting gas shocks shown in pink. It would take these two clusters colliding at very fast speeds to produce these shocks, speeds that are relatively unlikely -- to varying degrees -- according to the best simulations of our Universe.

But unlikely is a far cry from impossible, and velocities this high are in no sense forbidden. Seeing one example of this in our Universe is by no means damning evidence against our view of dark matter and structure formation: on the contrary, having a Universe where at least one giant galaxy cluster would be moving this quickly relative to another is again more common than winning the crocodile-tooth lottery described above.

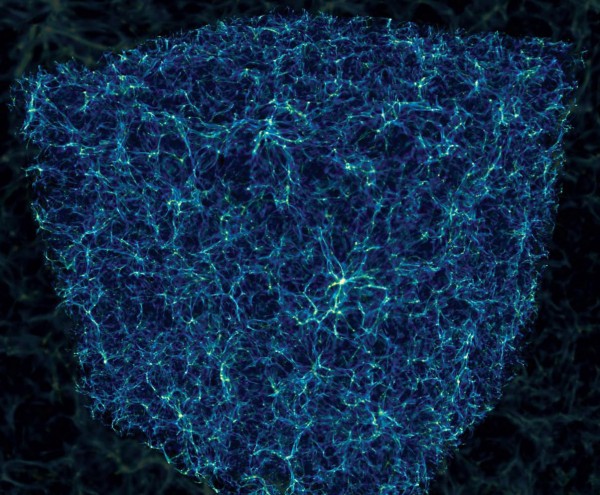

And finally, there's a new paper -- being promoted today -- that's just discovered the largest known structure in the Universe!

There are (probably) two distant, large groups of Quasars -- intense, X-ray emitting black holes found at the centers of interacting or active galaxies -- found some 13 billion light years away (at a redshift of 1.3), that are spread so far across the Universe that it would take light four billion years to go from the most distant end of one to the other.

Image credit: Roger Clowes et al., from http://arxiv.org/abs/1211.6256.

That's the exciting part, and that's the part worth reporting. However, in their paper, the authors also claim that this violates large-scale homogeneity, which is the assumption that the Universe is roughly -- on average -- the same at all places in space. While this isn't true for a galaxy or even a group of galaxies, it should be the case that, if we put an imaginary box around a large enough volume of space, all places in space should look roughly the same.

The largest structures in our Universe should be about 1.2 billion light-years on-a-side, but if this gigantic, newly discovered group of quasars is just one giant structure, then it's got a volume equivalent to being about 1.6 billion light-years on-a-side!

But first off, that's a big if: this could easily be two (or more) separate structures that just happen to be located relatively near one another. We're only seeing the quasars, here, due to the great distance, and follow-up observations are necessary. And second, even if this is just one giant structure, it could just be the case that this is the Universe we have, and there are going to be some unlikely objects in it. After all, it made all of us, didn't it?

So be aware of the inverse gambler's fallacy, and remember to keep in mind all the great successes of modern science.

Don't let the observation of an unlikely event swindle you out of our great understanding of the natural world, but keep an open mind for even better explanations, because that's how science always moves forwards. In the meantime, enjoy our latest discoveries and what's quite possibly the largest structure in the Universe: so large it might even (somewhat) defy our preconceived expectations!

Tangent: The fact that the inverse gambler's fallacy is obviously a good fallacy (I like the illustration of randomly firing at the side of a barn and drawing targets around the bullet holes) often has me a bit unsure of how to interpret entropy applied to anything but heat. That is, in a common example, you might talk about a box with a partition and gas molecules on one side but not the other, and how if you remove the partition the molecules will bounce around and spread out and it's very unlikely to find them all on one half ever again -- "there are many more disordered states ergo it's more likely", &c.; and therefore the more evenly spread gas has higher entropy.

But that sounds like the reverse gambler's fallacy again; what, except our opinion, makes a state "disordered"? (And we can't say "its higher entropy", because then the definition is circular.) It sounds an awful lot like drawing a classification of "disorderly" around all those states that to a human mind seem jumbled up, and going from there -- clearly an instance of the fallacy. I suspect that's not really what's being done (smart people have used the analogy, so I expect there to be something to it), but I've never clearly understood it.

So what are the odds that some old guy with a big white beard who lives somewhere up above the clouds created Adam and Eve 6,000 years ago and we are all (pygmies, eskimos and polynesians alike) descended from those two?

The definition of entropy, S = k ln(Ω), is only about energy partition. Opening up the box lets the energy of the molecules move into more spaces. If there are twice as many spaces, then there is ln(2) more entropy.
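Spelled out as a short worked equation (a sketch, assuming an ideal gas of N molecules whose accessible volume doubles at fixed energy; N and the subscripts are notation introduced here):

```latex
\begin{align}
S &= k \ln \Omega \\
\Omega_{\text{after}} &= 2^{N}\,\Omega_{\text{before}} \\
\Delta S &= k \ln \Omega_{\text{after}} - k \ln \Omega_{\text{before}} = N k \ln 2
\end{align}
```

For a single molecule (N = 1) this is the ln(2) mentioned above, in units of k.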

Thanks Ethan.

As long as quantum mechanics allows all freak branches, nothing can be excluded. The fundamental question for empirical science is thus: Is there any cut-off on probability where the principle of plenitude is bounded more restrictively (by pure logic) than done by QM as we know it today? I wrote about this coincidentally just yesterday in terms of Boltzmann brains and cosmological horizons, where there are papers that do the "inverse of the inverse gambler's fallacy" in a sense, and that may also be wrong, see here and links in it:

http://www.science20.com/alpha_meme/observer_dependence_hawking_radiati…

you explained the fallacy in the argument very effectively in the first two paragraphs, but it was all downhill from there.

it isn't the inverse gambler's fallacy at all. it is attempting to apply probability calculations to non-stochastic events. there's undoubtedly a fancy name for it.

when you assert that "if we simulated our Universe millions of times, alignments like this in those two data points would occur hundreds of times" you are treading the fine line between nonsense and dishonesty. it depends on how one programs the simulation, and *nothing* else. the ghost of david hume tells me he is very, very disappointed with you.

@Petter Häggholm,

(Expanding on daedalus2u's comment.) You can think of entropy roughly as the logarithm of the number of microstates consistent with a given macrostate. In your box example, the macrostate is specified by the temperature (equivalent to the kinetic energy averaged over all molecules), pressure (time-average of the force exerted on the walls by the gas molecules), and volume of the container. The microstate is the detailed specification of the positions and momenta of all of the molecules.
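As a toy illustration of that counting (a minimal sketch, not a full statistical-mechanics treatment): take N distinguishable molecules and let the macrostate be simply "n of them are in the left half of the box". Positions are coarse-grained to left/right and momenta are ignored, which are simplifying assumptions made here; the function names are made up for the example.

```python
from math import comb, log

def microstates(n_total, n_left):
    """Number of ways to choose which n_left of n_total molecules sit in the left half."""
    return comb(n_total, n_left)

def entropy_over_k(n_total, n_left):
    """Dimensionless entropy S/k = ln(number of microstates)."""
    return log(microstates(n_total, n_left))

N = 100
for n_left in (100, 75, 50):
    print(n_left, microstates(N, n_left), round(entropy_over_k(N, n_left), 2))
```

All molecules on one side corresponds to exactly one arrangement (S/k = 0), while an even split corresponds to about 10^29 arrangements. No judgment about what looks "jumbled" enters: the macrostate is defined first, and the count follows from it.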

"But that sounds like the reverse gambler’s fallacy again; what, except our opinion, makes a state “disordered”?"

Is there a pattern to it.

I.e. could you replace the long string of coordinates of the gas molecules with a smaller set of numbers detailing the order to their positions and energy?

Undoubtedly dumb questions: why do we speak of these large groups of quasars that are 13B LY away in the present tense? Aren't we seeing them as they were 13B years ago? And, if we're seeing a part of the universe as it existed 13B years ago, and if the universe was much smaller then, is it that surprising to see groups of quasars that are closer together than they are today? And finally, how can we say that we have a "map" of the universe when all we have is a map of the universe as it appears to us, right now? By which I mean, the farther away from us you move in the "map", the more different that region of space is today from how we see it in the map. Discuss please ;-)

People are getting worked up over a difference this small? Seriously? I think somewhere statistics and probability got taken too far.

@Cyan

"You can think of entropy roughly as the logarithm of the number of microstates consistent with a given macrostate. In your box example, the macrostate is specified by the temperature (equivalent to the kinetic energy averaged over all molecules), pressure (time-average of the force exerted on the walls by the gas molecules), and volume of the container. The microstate is the detailed specification of the positions and momenta of all of the molecules."

Exactly, and there is the fallacy.

A star being born is going from a state of high entropy ('disorder') to a state of low entropy ('order') in time.

Because gravity is supposed to be a low entropy process.

But at the same time entropy can only rise, according to Ludwig Boltzmann. Don't think I do not honour the man, I really think what he did was outstanding, but he made an error in my opinion.

The creation of photons (when a star is forming) is purely coincidental and has nothing to do with gravity itself, according to the Second Law.

Or time would be in reversal, or as an alternative, the universe would have to contract instead. But a contracting universe also needs explanations, including obvious lightspeed differentiation through time.

However, if Big Bang Theory survives there are still a lot of problems to solve.

Li-6 observations in low-metallicity halo stars show an unexpected overabundance in addition to already big problems regarding Li-7.

Recently discovered gravitational arcs at a large distance suffer also the problem that they should not exist according to BB theory.

Dark matter is a problem also: the Baksan Underground Scintillator Telescope (BUST) shows no enhancement of muons coming directly from the sun, indicating that if dark matter is like a normal particle it should have shown a decay (muons).

Whatever be the outcome, I agree with Ethan:

'Don’t let the observation of an unlikely event swindle you out of our great understanding of the natural world, but keep an open mind for even better explanations, because that’s how science always moves forwards.'.

"But at the same time entropy can only rise, according to Ludwig Boltzmann."

Oh dear. The fail was so hard there I think it registered on the Richter scale.

Entropy IN A CLOSED SYSTEM can only rise or stay steady.

However, there's a whole damn universe outside and it's getting high entropy illumination from the star.

@Mike

If the universe expands and you take a picture, the further you look away the distances would appear to grow, although the speed of light would continue to be the same in reality.

However, gravitational arcs should not appear at great distances at all - according to theory.

In a contracting universe on the other hand, your 2D TV should show today more information on the screen - all the 3D information from the past has to be compressed.

Showing enormous densities at great distances (CMB radiation ?, DM as overly acting gravitational interaction ??) and lightspeed will also be different from our point of view.

"Einstein's gravitational arcs" could be the remnants of compressed 3D information, and we will of course underestimate the age of the universe.

Just to clarify here,

I do think this universe shows entropy is rising.

And I do think the creation of photons is linked to entropy.

Boltzmann however disagrees in case of a star being born.

Outstanding. Thank you. I do have a very elementary question, if you would forgive. I am having a hard time putting together "largest structure" with "radio waves". I recall quasars to be like a super small "sound" essentially produced by "quaking" or "bending" of the so super small that it has no mass? The commenter's request for discussion re: what we see & what is now is profoundly observant & I eagerly await reading contributions of thought. I see something like a non-entity-thing of something like caves on earth and changeable like clouds just up beyond, wow.

Distant objects have their X-ray emissions stretched into the radio spectrum.

Simple as that.

"I do think this universe shows entropy is rising."

As Cyan and daedalus2u say, the universe expanding increases the number of states available, therefore any repartitioning increases entropy.

And all that repartitioning energy requires is that energy is sent out in small packets. I.e. photons.

Thanks Ethan for your great post, and also thanks to the commenters drawing connections between entropy and “odd” outcomes.

I agree with misanthropope that the examples are not cases of the Inverse Gambler’s Fallacy which is quite specific (eg you’re visiting a foreign country and see that their latest lottery winning ticket is number 1234567 and you say “This lottery must have been running a long time because that’s a really unusual number”). Rather it’s a common misapplication of statistical inference.

But it is not “attempting to apply probability calculations to non-stochastic events”. In fact the simulations Ethan refers to are stochastic events and so is our universe’s evolution. Ethan’s comment “if we simulated our universe millions of times...” is both honest and sensible. The outcome of the millions of simulations (in terms of being in accord with our observed universe) depends on two things: the correctness of the model and luck. So the more our universe is in disagreement with the simulations, the more we’ll think the model is wrong.

The misapplications described in the post are to do with significance levels. The more aberrations that you’re looking for, the more the *collective* P value goes up. P can (sort of) be thought of as (more or less indicating) the probability that the aberration occurred only by luck and traditionally you want this really small to call something odd. P measures how much you should raise your eyebrows when you see the data. Critically, it depends on what you decide to test for *before* you have any data. If someone had said to you before you threw the die that they think it is rigged and doesn’t ever come up six *then* you should raise your eyebrows a little bit with the dice data above.

When new scientific data comes out we’re typically looking for anything odd. P gets high. Our eyebrows should stay low when we look at the multipole data.
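A quick sketch of that dilution effect, assuming for simplicity that the m things being checked are independent and each has the same chance alpha of looking odd by luck alone (both are assumptions made for illustration; real checks on the same data set are usually correlated):

```python
def chance_of_any_oddity(alpha, m):
    """Chance that at least one of m independent checks looks odd by luck alone."""
    return 1 - (1 - alpha) ** m

for m in (1, 10, 100):
    print(m, round(chance_of_any_oddity(0.05, m), 3))
```

With a 5% threshold, looking at 100 independent features almost guarantees that something will look odd, which is why the question asked before seeing the data matters so much.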

Ethan: "But it’s a logical fallacy to state:

Because some thing (or combination of things) that was unlikely to occur wound up happening, our picture of how the Universe got to be this way is probably incorrect."

*** It is a logical fallacy to state:

The Copernican Principle is true, even if we observe a preferred direction in space which observationally falsifies it.

Ethan: "Because unlikely things happen all the time: that’s part of what comes along with living in a chaotic Universe where many seemingly random outcomes occur."

*** Except this outcome is not random. The alignments correlate in statistically (highly) significant ways with the location of the only known observers in the Universe. Copi, Huterer (2010) et al have reported a 1 in 80,000,000 chance for the combined alignments of quad and octupole with each other, and with the ecliptic, by chance.

The unlikelihood is significantly increased given the observations of a preferred galaxy spin direction axis, out a billion light years, similarly aligned with the quadrupole, octupole, and ecliptic as reported by Longo (2011).

We have a five to six sigma anomaly here, so waving one's hands at the blackboard with a dismissive chuckle won't cut it.
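For reference, a probability can be converted to a "sigma" level with the standard normal distribution. A minimal sketch, assuming the two-sided convention (other conventions shift the answer slightly) and taking the 1 in 80,000,000 figure quoted above at face value; `sigma_level` is a name made up for this example:

```python
from statistics import NormalDist

def sigma_level(p, two_sided=True):
    """Number of standard deviations whose Gaussian tail probability equals p."""
    tail = p / 2 if two_sided else p
    return -NormalDist().inv_cdf(tail)

print(round(sigma_level(1 / 80_000_000), 2))
```

The result lands between five and six sigma, but only if the quoted probability was fixed before looking at the data.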

Ethan: "And people — many of whom are often reasonable scientists otherwise — use these unlikely outcomes from random events to try and sow doubt about the quality of the underlying scientific theories."

*** So even "often serious scientists" "sow doubt"? Good heavens man it's science, not your catechism. Is your faith threatened by these scientific observations?

Why, it appears that yes, it is.

After all, even serious scientists have noticed that handwaving and chuckling are often just the shot across the bow. More serious character assassination ("often" serious scientists???) can be predicted with a high confidence level to follow any continued doubt-sowing....

Ethan: "There are people who look at the quadrupole and octopole moments of the Cosmic Microwave Background — or the first two points on the graph above — and question the entirety of modern cosmology. Why?"

*** Because those first two points on the graph above directly contradict the Copernican Principle itself.

Ethan: "Because they state that the “odds” of having a Universe that conspired to give those two data points just randomly is relatively low."

*** "Relatively low". It is 1 chance in 80,000,000 just for the quad and octupole alignments. Toss in the galaxy spin rotation axis, the preferred periodic galaxy distributions with Earth at or near the center of the inhomogeneity, and the demonstration by Clifton, et al, in 2008 that a "void cosmology" with Earth near the center can account for Type 1a supernovae without having to invent dark energy........

Well. As I said above, handwaving and chuckles won't get it done this time.

Ethan: "(But, for what it’s worth, better than my dice-roll odds, atop.) When you hear the terminology “Axis of Evil” applied to cosmology, this is what they’re talking about."

*** Thanks for spelling it right.

Ethan: "But there’s nothing special at all about it: if we simulated our Universe millions of times, alignments like this in those two data points would occur hundreds of times. We just happen to live in a Universe where it did."

*** False. You ignore the combined improbabilities of quadrupole and octupole mutual alignment, with the alignment of both with the ecliptic. You ignore the further improbability of a preferred axis for galaxy handedness along the same Axis.

You ignore it because you don't want anyone sowing doubt.

You sound like a religious apologist, because that is exactly what you in fact are.

But you are not a very good one.

I suggest you immediately read and understand "Science As Falsification" by Karl Popper.

I always thought (and still do) that Homogeneity principle is more of a guess than a serious scientific law.

If we look at the current BB model with inflation and everything then yes, right after inflation ended and energy became matter, the Universe probably was the same everywhere. If it's still the same now (and of course, now the question arises what's a big enough sample size), I'm not sure. Mind that I'm not saying it isn't. Just saying since we don't really understand 95% of the Universe's content (DM/DE) it's hard to give an honest statement of what the Universe "should" be on the largest scales. Too many unknowns for me at this time to believe that homogeneity must be true.

Is there any table available, which contains the measured values of _all_ multipoles from 1 to 1500? Thanks in advance.

There seems to exist a kind of pattern for all "divulsors" in the plot related to prime numbers and small multiples of them as

3, 5, 7, 3·7=21, 2·3·7=42, 3·5·7=105, 3·5·7²=735, which are the most obvious ones. I would like to check this...

SL, homogeneity is pretty much required, otherwise we couldn't discover the science of objects we can't physically reach.

James, you ignore that for millions of years, brains have developed into pattern seeking wetware machines.

Because you think what you see is real and hate the idea that you aren't smart enough to look behind the curtain of your limited perception.

mmm... discover the science of objects? Not sure I understand what you mean by this.

from wiki: ..."the cosmological principle is the working assumption that observers on Earth do not occupy an unusual or privileged location within the universe as a whole, judged as observers of the physical phenomena produced by uniform and universal laws of physics."

and with this I agree completely.

also.."usually stated formally as 'Viewed on a sufficiently large scale, the properties of the Universe are the same for all observers.'" - again.. properties of Universe are same for all... Agree completely!

Now comes the "but".. ... "This amounts to the strongly philosophical statement that the part of the Universe which we can see is a fair sample".... This needn't be so. At least I don't see any proof of why our visible universe is "large enough" sample that we can be sure.

Here is what I have in mind as an example. We don't understand DE, what triggered it or how it works. We know (sort of) that the current accelerated expansion started some 6-8 billion years after the BB. But this is based on our observable part. Since we don't know the properties or causes of this, you could postulate that this isn't a uniform effect in the whole universe. It might have different values in different regions. There might be "observers" who don't see anything but a couple of galaxies when they look at the night sky because the ratio of DE to DM and baryonic matter is different. If there are such observers then the homogeneity principle doesn't hold. The laws of physics are the same for all, but not all see the same.

It might be that I don't understand enough math. But from a theory side, I don't see why homogeneity is such big thing to give up if needed. There is the other side, that whatever Universe is beyond our observable limit, is really of no concern to us since it's physically irrelevant.

The "inverse gambler's fallacy" can be understood as a failure to grasp conditional probability.

Once Billy Bob has won the lottery, the conditional probability that his number was drawn is exactly "1".

It doesn't matter what the "a priori" odds were.

Any universe observed by humans has to be compatible with the existence of human observers.

That no more implies that the universe was magically created for humans than the fact that every lottery has a winner implies that every lottery is won by a miracle.

Who would have ever come up with the name Wow? Anyway, Wow thanks for the free psychoanalysis, but surely you can do better than that; or on the other hand maybe you can't.

"mmm… discover the science of objects? Not sure I understand what you mean by this. "

Every action and reaction requires an object to display that.

It's a general way of saying "physical science".

If we don't suppose the same physics, then unless and until we go to the nearest star physically, we can't say anything about it. Because the science "could" be different, and interpreting it based on what OUR sun is doing could be incorrect.

Indeed we assume the science we see in the lab wrt photons and radiation apply to the sun. But if we can't assume homogeneity, we can't tell if the sun is actually there doing what we see happening on the earth.

So to make any assertion about something beyond our immediate reach, we have to assert homogeneity in the science.

Same thing for distant past events.

@ Wow

I'm not sure you read my last post. I have said several times that I do believe that the laws of physics are the same everywhere. That doesn't mean we know them all. But which of the laws that we do know prohibit a lack of homogeneity in the whole Universe (not just our visible part)?

What I object to is this "This amounts to the strongly philosophical statement that the part of the Universe which we can see is a fair sample"

Now maybe we disagree on the meaning of "homogeneity". If by that you only mean that the laws are same everywhere, then ok.

I argue the laws could be the same and yet yield different "visible universes". No? What law prevents the expansion rate of one part of the universe to be different than some other part? Bear in mind the huge distances beyond our visible universe. What physical law would be different? None that I know of. And if there is one, please point me to it so we can settle this :)

Every constant is the same... everything works the same as here. Just there, there is a bit more DE than here. Or it's stronger than here for whatever reason. We don't know anything about DE. It's not a constant. It's not tied to any law or process that we know. It violates nothing. Yet it would yield a different universe for its observer there. And if so, how could we ever know? It's beyond our causal sphere.

This has no effect on what you talk about... the nearby Sun.. or whatever is inside our visible universe. And I have no problem with homogeneity inside our visible Universe. But the original theory talk about the whole Universe, not just the visible part.

I am hoping for an answer, since it's an accepted assumption. But what law/laws would be violated?

p.s.

sorry.. made one BIG typo...

In the sentence "What law prevents the expansion rate of one part of the universe to be different than some other part?"

Not "expansion" but "acceleration of expansion".... that's tied to DE. Am not talking about the fundamental expansion of GR.

But acceleration started at one point. It wasn't there before. We don't know why. It could just as well stop some time in the future, or increase even more, or decrease. At this point we don't know what it is or what drives it. But it has the power to make things very different.

I guess the bottom line is I think the homogeneity principle, as far as what we "see", is more of a wish than anything else. We want the Universe to be all the way as is our own visible one. I guess it's natural. I think there is plenty of room for the Universe to be more bizarre than anything we can yet imagine :)

None, I suppose. That would be one way to have the multiverses from inflation that Ethan was talking about in an earlier thread.

But that would also be why it wouldn't be different for long in any usable universe. It would cause the sections going at a different expansion to eventually disassociate with the rest of causality.

I.e. bud off into another universe. Which gets you back into the universe having one value for expansion.

"Now maybe we disagree on the meaning of “homogeneity”. If by that you only mean that the laws are same everywhere, then ok. "

Well it is the only way I can see for the claim you have a problem with to be problematical.

Only a different set of laws would make our bit of it we've looked at unrepresentative.

Remember, the figures in astronomy have a tendency to have errors of a similar order of magnitude in them. Distances to the stars were changed significantly with Hipparcos giving a better standard candle measurement.

It makes astrophysics a bit of a bugger if you're interested in the detail, to be honest.

James:

With a P value of 1/80Million as you’ve quoted, then providing this value has been obtained in a meaningful way, then I agree with you that this needs explanation. For a P this small, its watering down due to implicitly searching for hundreds of other oddities as well is still not enough to explain it away.

I’m having trouble finding the 1/80Million reference though. I’ve found a reference of Copi, Huterer, et al (2010) at http://arxiv.org/abs/1004.5602 but they seem to talk of P values in the range 95% up to 99.9% - noticeable but not really big enough to be conclusive, all things considered.

Once a significant P is established the first thing to do before throwing out the Copernican Principle or current Big Bang models is to reconsider methodological errors. Copi has investigated this in some detail over various papers and it’s clear that there are concerns here, such as in subtracting effects from our own galaxy: “Let us assume that the various methods that have been developed to get rid of the Galactic foreground in single frequency maps of the microwave sky are reliable (though we argue below that this might not be the case)” in the abovementioned arXiv paper. The ultimate authority, Wikipedia :), at http://en.wikipedia.org/wiki/Cosmic_microwave_background_radiation#Low_… sums it up with “...the quadrupole prediction of Lambda-CDM cosmology is consistent with the data at the 10% level and that the observed octupole is not remarkable”. There’s a way to go yet before we should start re-thinking theory.

One other general point that I think may have been missed in these discussions. If data seems to be indicating a remarkable conclusion, then there’s a key distinction to make. Does it contradict previous ideas that have always seemed to hold true or does it contradict previous hard evidence? For example, the Copernican Principle (as far as I know) is an example of the former. I’d imagine that the currently accepted Big Bang models are examples of the latter. If the latter applies then you can’t really ditch the established ideas as they have their own P values fighting back. Ethan, you summed up that idea very nicely in a previous post where you wouldn’t have a bar of faster-than-light neutrinos.

Thanx for the input :)

Petter Haggholm:

To expand on Cyan's expansion, we find quantities such as temperature and pressure to be physically important to the (macroscopic) behavior of a system. Average values for those quantities have a large number of microstates associated with them; weird values for those things (e.g. every particle pushing only on one side of the box; which means all kinetic energy vectors aligned) have many orders of magnitude fewer microstates.

You can, if you like, dispense with the word "disordered" altogether, as long as you understand this. I've heard at least one physicist opine that talking about disorder can send young students down the wrong path, since we naturally think of the terms ordered and disordered as applying to objects in time or space, while what entropy is talking about is distribution of energy among available energy states.
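The microstate counting above can be sketched with a toy model. This assumes a hypothetical box of 100 distinguishable particles, each of which is simply in the left or right half; it counts positions rather than energy states, so it only illustrates the counting argument, not a real gas:

```python
import math

def microstates(n_particles: int, n_left: int) -> int:
    """Ways to choose which n_left of n_particles sit in the left half of the box."""
    return math.comb(n_particles, n_left)

N = 100  # hypothetical particle count
even_split = microstates(N, N // 2)   # the "average" macrostate
all_one_side = microstates(N, N)      # the "weird" macrostate
print(f"even split: {even_split:.3e} microstates")
print(f"all on one side: {all_one_side} microstate")
```

With only 100 particles the even split already has about 10^29 microstates against a single one for the all-on-one-side arrangement, and the gap grows enormously for realistic particle numbers.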

@Ray Hinde:

The reference for the 1 in 80,000,000 probability for the combined alignments can be found here:

http://arxiv.org/pdf/astro-ph/0605135v2.pdf

Relevant excerpt:

"As they are all independent and all involve primarily the quadrupole and octopole, they represent a ∼ 10^-8 probability chance “fluke” in the two largest scale modes. To quote [7]: We find it hard to believe that these correlations are just statistical fluctuations around standard inflationary cosmology’s prediction of statistically isotropic Gaussian random a_lm [with a nearly scale-free primordial spectrum]."

The Wikipedia citation is addressed here:

http://arxiv.org/pdf/1004.5602v2.pdf

Relevant excerpt:

"... though the I(ntegrated) S(achs)W(olfe Effect) may lead to alignment of the quadrupole and octopole it is not an explanation for the observed Solar system alignments. This alignment would need to be an additional statistical fluke. Finally, this explanation does nothing whatsoever to mitigate the lack of large scale angular correlation because the ISW effect acts as an additive component and should be statistically uncorrelated from the primordial CMB. Therefore, even if the ISW reconstruction is taken as reliable, this argument would imply;

1. an accidental downward fluctuation of the SW sufficient for the ISW of local structure to dominate and cause an alignment, and

2. an accidental cancellation in angular correlation between the SW and ISW temperature patterns.

Neither the WMAP team nor Francis and Peacock estimate the likelihood of these two newly created puzzles.

Regarding the second major issue — the lack of angular correlation — the WMAP team refers to a recent work by Efstathiou, Ma and Hanson [35] who argue that quadratic estimators are better estimates of the full sky from cut-sky data and are in better agreement with the concordance model. While these estimators have been shown to be optimal under the assumption of statistical isotropy, it is unclear why they should be employed when this assumption is to be tested."

The Universe is not Copernican on its largest scales, Ray, and any attempt to render it Copernican via CMB observations falsifies the first CMB peak which is advanced as the most powerful argument for inflation.

The problem is very real, and it is the opposite of the scientific method to refer to those who recognize it as "science denying scoundrels".

We have a real problem on our hands, which is that Ethan and his handlers intend to impose a religion instead of a method.

The method involves falsification.

The religion involves despicable religious dogma intended to uphold a Copernican Principle (a metaphysical, not a scientific, principle) no matter what.

Rick Delano is absolutely correct, Copernicus is the counter-evidential god of dogmatic scientists and this cross-disciplinary problem is so widespread among scientists that they are not even aware of it. This is because they *think* via the worldview of their pre-decided left-winged cosmology and it is the exact disease that Brandon Carter first pointed to in Krakow 40 years ago and it is well documented that I've been saying it for at least 15 if not 20 years.

All the while Brandon Carter has backtracked on the implications of his one self-honest observation for one reason only, dogma.

These people find god faster than creationists when none is called for when there is a strong observational implication in the physics, so they immediately take the opposite ideological position, rather than to look for some good physical reason why physics that applies equally to every planet in every galaxy that evolved at about the same time that ours did might simply be specially ***necessary*** to some physical process. Like an energy conservation law that ***requires*** life as a necessary function of the thermodynamic process, for example only.

Scientists can't get there from here, no matter how much evidence there is thanks to their religion, "Copernicanism", and I assert that this and only this is the reason why we have no true anthropic cosmological principle that resolves the cc problem... as well as "others".

I further assert that this is the reason why we have no ToE and never will as long as nothing changes between the ideological right and the left.

That is just PATHETIC!!!

http://anthropiccosmologicaprinciple.wordpress.com/article/the-anthropi…

OK Rick and Rick...

take two valiums and call us in the morning... jeeeez... you people have serious issues when you come here and use the comment section as a political platform.

"Scientists can’t get there from here"

Is there a "here"? Just because you think this doesn't make it so.

And, no, science isn't a religion. Sorry.

Quantum mechanics is caused by a software bug in "somebody's" simulation of a universe...

No, no, Peter. QM isn't a bug, it's a feature. See, rather than spending all the compute power to integrate the motion of particles over each time step of simulation in a deterministic fashion, instead they'd just let the value float until it was measured via interaction with something else and then generate a random number for the result. Quite elegant when you think about it.

@Rick DeLano

@Rick Ryals

Thanks Rick D for giving me details of those references. My lack of acknowledgement has been due to a combination of our Internet connection going down and myself now being on a vacation.

I came into this discussion knowing nothing about the axis of evil controversy so I only brought into it my prior convictions about the general nature of the universe. I'm now interested enough in your viewpoint to want to resolve this issue to my own level of comfort. As this thread is probably close to its use-by date I'm not going to pursue it right away.

I still agree that a 10^-8 level needs resolution but the arguments haven't convinced me and I can tell you why. I'm not doing this because my opinion's important but because I think it explains how others might think.

One of two things would convince me: spending a lot of time understanding the complexities of the analysis and then being convinced myself; or seeing the consensus of the "predecided left winged cosmolog(ists)" changing. Otherwise I will favour the least extraordinary explanation which to me is that there is a technical problem either in the mathematical methodology or in the statistical assumption.

Coming from a geocentric ideology in the first place makes accepting the result the most reasonable thing to do. In fact, the result clearly supports such previous beliefs. I can also see that my position and that of the scientific community would seem churlish, but it isn't really.

You've interested me enough to look into this more at some point. Thanks and thanks Ethan.

The funny thing about some scientists is that, given 100 billion years, they can explain anything; and remarkably, their luck never runs out.