Regular readers probably know that I'm into more than just science, skepticism, and promoting science-based medicine (SBM). (If they're regular readers of my other, not-so-super-secret project, they might also realize that they've seen this post before elsewhere. I had to stay out late for a work-related event and decided to tart it up and recycle. So sue me.) I'm also into science fiction (hence the very name of this blog, not to mention the pseudonym I use), computers, and baseball, not to mention politics (at least more than average). That's why our recent election, coming as it did hot on the heels of the World Series in which my beloved Detroit Tigers, alas, utterly choked and got swept away by the San Francisco Giants, got me to thinking. Actually, it was more than just that. It was also an article that appeared a couple of weeks before the election in the New England Journal of Medicine entitled Moneyball and Medicine, by Christopher J. Phillips, PhD, Jeremy A. Greene, MD, PhD, and Scott H. Podolsky, MD. In it, they compare what they call "evidence-based" baseball to "evidence-based medicine," something that is not as far-fetched as one might think.

"Moneyball," as baseball fans know, refers to a book by Michael Lewis entitled Moneyball: The Art of Winning an Unfair Game. Published in 2003, Moneyball is the story of the Oakland Athletics and their manager Billy Beane and how the A's managed to field a competitive team even though the organization was—shall we say?—"revenue challenged" compared to big market teams like the New York Yankees. The central premise of the book was that that the collective wisdom of baseball leaders, such as managers, coaches, scouts, owners, and general managers, was flawed and too subjective. Using rigorous statistical analysis, the A's front office determined various metrics that were better predictors of offensive success than previously used indicators. For example, conventional wisdom at the time valued stolen bases, runs batted in, and batting average, but the A's determined that on-base percentage and slugging percentage were better predictors, and cheaper to obtain on the free market, to boot. As a result, the 2002 Athletics, with a payroll of $41 million (the third lowest in baseball), were able to compete in the market against teams like the Yankees, which had a payroll of $125 million. The book also discussed the A's farm system and how it determined which players were more likely to develop into solid major league players, as well as the history of sabermetric analysis, a term coined by one of its pioneers Bill James after SABR, the Society for American Baseball Research. Sabermetrics is basically concerned with determining the value of a player or team in current or past seasons and with predicting the value of a player or team in the future.

There are a lot of parallels between moneyball and "evidence-based medicine" (EBM), as you might imagine, as Phillips et al point out:

In both medicine and baseball, advocates of evidence-based approaches argued for the enhanced vision of statistical techniques, which revealed what tradition or habit had obscured. The difference between an all-star and an average hitter, for example, works out to about one hit every other week, a distinction that's almost impossible for even a trained scout to recognize. Statistical power can be as relevant as opposite-field hitting power in the assessment of players. Early proponents of controlled medical trials similarly pointed to how difficult it was for an individual practitioner to determine a treatment's efficacy or distinguish real effects from apparent ones after seeing only a small number of clinical cases. Mathematical measurements and calculations were meant to push practitioners away from naive visual biases — a player who “looks right” or a therapy that seems to work. Walks are far more important than they first appear in baseball; walking is more important than it first appears in medicine.
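To put rough numbers on the "one hit every other week" claim in that passage, here is a minimal back-of-the-envelope sketch. The specific batting averages (.300 versus .275), the 20 at-bats per week, and the coin-flip model of an at-bat are my own illustrative assumptions, not figures from Phillips et al.

```python
import math

def weekly_hit_gap(avg_star, avg_typical, at_bats_per_week):
    """Expected extra hits per week for the better hitter."""
    return (avg_star - avg_typical) * at_bats_per_week

def at_bats_to_tell_apart(avg_star, avg_typical, z=1.96):
    """Crude number of at-bats (per hitter) before the gap in batting
    average exceeds z standard errors of the difference.

    Assumes every at-bat is an independent coin flip, which is a
    simplification rather than a real model of hitting.
    """
    p_bar = (avg_star + avg_typical) / 2
    gap = avg_star - avg_typical
    # Variance of the difference of two proportions is 2*p*(1-p)/n,
    # so solve gap >= z * sqrt(2*p*(1-p)/n) for n.
    return math.ceil(z ** 2 * 2 * p_bar * (1 - p_bar) / gap ** 2)

# Hypothetical hitters: .300 "all-star" vs .275 "average", 20 at-bats a week.
print(weekly_hit_gap(0.300, 0.275, 20))
print(at_bats_to_tell_apart(0.300, 0.275))
```

Under these assumptions the gap works out to about half a hit per week, and it takes on the order of 2,500 at-bats per hitter, several full seasons, before the difference clearly stands out from chance. That is the sense in which a scout watching a handful of games has almost no hope of seeing it.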

Moneyball has also entered politics in a big way over the election cycles of 2008, 2010, and 2012. In the run-up to the 2012 election, I, like many others, became hooked on FiveThirtyEight, a blog devoted to applying rigorous statistical analysis to the polls. (FiveThirtyEight refers to the number of votes in the Electoral College.) As political junkies (and even many casual observers) know, the man responsible for the blog, Nate Silver, got his start as a "moneyball"-style sabermetrics baseball analyst. In 2002, he developed a model to assess and predict a baseball player's performance over time, known as PECOTA, which stands for "Player Empirical Comparison and Optimization Test Algorithm." Silver brought his model to Baseball Prospectus. Several years later, he was applying his statistical methods to the 2008 election, and the rest is history. Indeed, in this year's election, Silver correctly called all 50 states.

Silver's model basically works by looking at polling data from a wide variety of polls, particularly state-level polls, weighting the data based on how recent it is, sample size, and the pollster's history of accuracy. The polling data are then used to calculate an adjusted polling average subject to trend line adjustment, house effects adjustment, and likely voter adjustment, after which they are adjusted based on various factors, such as a state's Partisan Voting Index, and the like. Ultimately, Silver takes his aggregated poll data and uses them to run simulations that estimate the likelihood of a given outcome. That's how he came up with his famous estimates of how likely various scenarios were. For example, the day before the election Silver was estimating that Mitt Romney only had an 8% chance of winning the Electoral College. Republicans howled. We all know the results of the election and that President Obama won reelection more easily than the conventional wisdom had been predicting. Silver also had an excellent track record predicting the Republican landslide in the 2010 midterm elections. Back then, Democrats howled.
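For readers who want to see the general shape of this kind of approach, here is a toy sketch of weighting polls and then simulating outcomes. To be clear, this is not Silver's model: the polls, the weighting formula, the error sizes, and the `SAFE_VOTES` figure are all hypothetical values I made up for illustration, and the real model applies many more adjustments (house effects, likely-voter screens, and so on).

```python
import math
import random

# Hypothetical polls: (state, Obama margin in points, sample size,
# age in days, pollster accuracy rating between 0 and 1).
POLLS = [
    ("Ohio", 3.0, 800, 2, 0.9),
    ("Ohio", 1.0, 500, 10, 0.6),
    ("Florida", -1.0, 1000, 3, 0.8),
    ("Florida", 1.5, 600, 1, 0.7),
]
ELECTORAL_VOTES = {"Ohio": 18, "Florida": 29}
SAFE_VOTES = 253  # hypothetical votes assumed won in states not modeled here
NEEDED = 270

def poll_weight(sample_size, days_old, rating, half_life=7.0):
    """Assumed weighting: bigger, fresher, better-rated polls count more."""
    recency = 0.5 ** (days_old / half_life)
    return math.sqrt(sample_size) * recency * rating

def weighted_margins(polls):
    """Weighted average margin for each state."""
    totals = {}
    for state, margin, n, age, rating in polls:
        w = poll_weight(n, age, rating)
        num, den = totals.get(state, (0.0, 0.0))
        totals[state] = (num + w * margin, den + w)
    return {state: num / den for state, (num, den) in totals.items()}

def win_probability(margins, n_sims=10000, state_sd=3.0, national_sd=2.0, seed=538):
    """Fraction of simulated elections that reach NEEDED electoral votes."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(n_sims):
        shift = rng.gauss(0.0, national_sd)  # polling error shared by all states
        votes = SAFE_VOTES
        for state, margin in margins.items():
            if margin + shift + rng.gauss(0.0, state_sd) > 0:
                votes += ELECTORAL_VOTES[state]
        if votes >= NEEDED:
            wins += 1
    return wins / n_sims

margins = weighted_margins(POLLS)
print(margins)
print(win_probability(margins))
```

The key design point is the last step: the output is not "Obama wins" or "Romney wins" but the share of simulated elections that go each way, which is how a modest lead in the polling averages can turn into a lopsided-looking probability.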

Not surprisingly, there was considerable resistance in baseball to "moneyball" at first. The "old guard" initially didn't like the implication that statistical modeling could judge the value of a player better than scouts, managers, and the front office. Moreover, the statistical predictions made by "moneyball"-inspired sabermetric analysis often clashed with conventional wisdom in baseball. This year we were treated to a similar spectacle, in which Nate Silver unwittingly became a political flashpoint because his numbers at no point over the last couple of months favored Romney. This led to considerable resistance to the statistically-based methods of not just Nate Silver, but Sam Wang of the Princeton Election Consortium and others as well. The highest that Romney's estimated probability of winning the Electoral College ever got in Silver's model was 39%, on October 12. That's actually not bad, but Obama was the favorite in Silver's model and remained the favorite up until the end. So different was Silver's prediction from the conventional wisdom (a super-tight election that would not be called until very late on election night or might even end up with Obama winning the Electoral College but losing the popular vote) and Republican expectations (a Romney win) that on election night, when the networks called Ohio for Obama not long after 11 PM, Karl Rove initially refused to accept the outcome, even though Fox News had also called it. Overall, predicting this election ended up being portrayed as a battle between data-driven nerds and ideological political pundits.

Of course, doctors are not baseball managers or ideologically-driven political pundits. Or, at least, so we would like to think. However, we are subject to the same sorts of biases as anyone else, and, unfortunately, many of us put more stock in our impressions than we do in data. Overcoming that tendency is the key challenge physicians face in embracing EBM, much less SBM. It doesn't help that many of us are a bit too enamored of our own ability to analyze observations. As I've pointed out time and time again, personal clinical experience can easily mislead, no matter how much it might be touted by misguided physicians like, for example, Dr. Jay Gordon, who thinks that his own personal observations, which lead him to believe that vaccines cause autism, trump the weight of multiple epidemiological studies that find no such link. The same sort of dynamic occurs when it comes to "alternative" medicine (or "complementary and alternative medicine" or "integrative medicine" or whatever CAM proponents like to call it these days). At the individual level, placebo effects, regression to the mean, confirmation bias, observation bias, confusing correlation with causation, and a number of other factors can easily mislead one.

Yet, in medicine there remains resistance to EBM. Indeed, there is even an organization of physicians that explicitly rejects EBM and frequently publishes screeds against it in its major publication. This organization is known as the Association of American Physicians and Surgeons, and I've written about it before. For example, a former president of the AAPS, Lawrence Huntoon, MD, once dismissed EBM, represented by clinical care pathways, systems-based care, and other products of EBM as "medical herdology." Other articles include titles such as Evidence-Based Guidelines: Not Recommended. In it, its author, Dr. Norman Latov, writes:

On its face, evidence-based medicine is just what the doctor ordered. What rational person would argue that medical decisions should not be based on evidence?

Upon closer examination, however, the term is deceptive. Evidence-based guidelines (EBGs) in fact only use evidence from controlled trials, and deny other types of evidence or clinical judgment, thereby distorting the decision process.

Indeed, when you read various critiques of EBM, they are almost inevitably different from our critiques of EBM (namely that EBM doesn't adequately take prior probability into account). There is also a difference between CAM practitioners and apologists and doctors who think they support evidence and science in medicine. For instance, when most doctors criticize EBM, they tend to attack it because they see it as limiting their "clinical judgment" or on the basis that there are things that "EBM can't account for." (See the parallel here between baseball and medicine?) While it is true that there are things that EBM can't account for, that doesn't necessarily mean that personal experience does better. Indeed, when it's tested rigorously, it tends not to. As for CAM practitioners, I tend to liken them to political operatives: Driven by ideology more than science, rather than simply being used to old ways of doing things, like baseball leaders in the age before "moneyball." For instance, take a look at Andrew Weil, who most definitely does not like EBM, going so far as to write:

RCTs have dominated decision making about efficacy in health care for almost 50 years. Many researchers have explored the difficulty of subjecting IM treatment approaches to RCTs. There are some characteristics of IM interventions that make RCTs particularly difficult to carry out, and perhaps even less relevant, than for conventional allopathic medicine. As Fønnebø pointed out, the gap between published studies of integrative approaches on the one hand, and the clinical reports by practitioners on the other hand, may partially result from the fact that placebo-controlled RCTs are designed to evaluate pharmaceutical interventions.

One can almost see Karl Rove railing against Fox News analysts about calling Ohio at 11:15 PM on election night 2012. (And what an amusing sight that was!) Whatever the reasons, be they ideological, a misguided belief in their own "clinical judgment," or just plain cussedness, there are a lot of doctors who don't like EBM, and there are a whole lot of CAM practitioners that don't like SBM, because CAM exploits the blind spot in EBM. But let's get back to the analogy between moneyball and EBM:

Critics of moneyball approaches have nonetheless been quick to emphasize the way in which perspective can be distorted, not enhanced, by statistics. One might overapply concepts such as Bayes' theorem or develop a habit of plugging data into statistical software simply to gain a patina of precision, regardless of appropriateness (tendencies that cause medical practitioners, in Alvan Feinstein's pithy phrase, to be blinded by the “haze of Bayes”). Critics have also pointed to what might be termed the “uncertainty principle” of statistical analysis: general data (How well does this player hit against left-handers? How well does this therapy work in myocardial infarction?) often fail to take into account consequential distinctions; but more specific data (How well does this player hit against hard-throwing left-handers on warm Sunday afternoons in late September? How well does this therapy work in right-sided myocardial infarction in postmenopausal women?) can involve too few cases to be broadly useful. Individuals, and individual scenarios, might always be idiosyncratic on some level — a truth perhaps borne out by long-standing efforts to appropriately apply the scientific results of clinical trials to individual patients in the clinic.

Here's where Phillips et al go wrong. As we have pointed out here on this very blog many, many times before, the problem with EBM is not that it overapplies Bayes' theorem. Rather it's that EBM favors frequentist statistics over Bayesian statistics, often massively underplaying the importance of prior plausibility, estimates of which Bayesian statistics demands. Indeed, not making Bayesian estimates of prior plausibility leads to EBM's blind spot towards CAM, such that statistical noise and bias in trial design can lead to the appearance of efficacy for therapies whose rationale, to be true, would require that much of modern physics and chemistry be not just wrong but massively wrong. In EBM, "plausibility bias" (or, as I like to call it, reality bias) plays only a relatively minor role, and that allows noise and bias in clinical trials of, in essence, nothing (e.g., homeopathy or reiki) to appear to be "statistically significant" effects.
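A minimal sketch of why prior plausibility matters so much: apply Bayes' theorem to ask how likely it is that a "statistically significant" trial reflects a real effect. The priors below (50% for a reasonably plausible drug, 0.1% for something like homeopathy), along with 80% power and a 5% significance threshold, are numbers I picked purely for illustration, and the calculation ignores bias in trial design, which would only make things worse.

```python
def posterior_real_effect(prior, power=0.8, alpha=0.05):
    """P(treatment really works | trial came out 'significant').

    prior: assumed probability, before the trial, that the treatment works
    power: chance a trial detects a real effect
    alpha: chance a trial of an inert treatment is 'significant' anyway
    """
    true_positives = prior * power
    false_positives = (1.0 - prior) * alpha
    return true_positives / (true_positives + false_positives)

# Hypothetical priors, chosen only to illustrate the contrast.
for label, prior in [("plausible drug", 0.5), ("homeopathy-like remedy", 0.001)]:
    print(f"{label}: {posterior_real_effect(prior):.3f}")
```

With these assumed inputs, a positive trial of the plausible drug leaves it about 94% likely to be a real effect, while the same positive trial of the implausible remedy leaves it at under 2%. The p-value is identical in both cases; only the prior differs.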

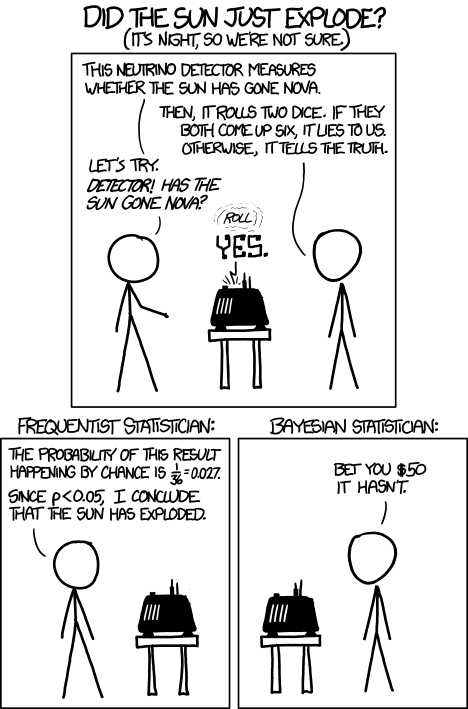

So, while "moneyball" does take Bayes into account somewhat, there is one big difference between moneyball and medicine, and that's science. There are certain things in medicine that can be dismissed as so implausible that for all practical purposes they are impossible based on physical laws and well-established science (e.g., homeopathy). The result of ignoring that was hilariously illustrated in a very simple way in a recent XKCD cartoon that asked if the sun just exploded.

Where Phillips et al do better is in pointing out that EBM has difficulty as the groups under study are chopped finer and finer. As we move toward the ultimate goal of developing "personalized medicine," EBM gets harder, as does SBM. It's also where the critics miss the boat. CAM practitioners like to invoke this problem as evidence that EBM/SBM can never result in "personalized medicine," but this criticism is disingenuous in the extreme. To CAM practitioners, "personalized medicine" means, in essence, "making it up as you go along," without a scientific basis. In contrast, "personalized medicine" in EBM/SBM can and should mean science-based treatments plus clinical judgment plus patient values. It's in idiosyncratic cases that clinical judgment is still very important, and EBM doesn't banish clinical judgment. Clinical judgment instead becomes the science and art of applying medical and scientific evidence to individual patients who don't fit the mold. Phillips et al are also correct in pointing out that another area in which moneyball can help in medicine is to increase value. Remember, the very reason moneyball was used in the first place was to help a team at a financial disadvantage compete against teams with a lot more money to burn. On the other hand, in medicine EBM and SBM are not necessarily the cheapest. It's all tradeoffs. For instance, EBM would tell us that for women above a certain risk level for breast cancer it is better to add breast MRIs to their screening regimen, which definitely costs more. What SBM should be able to do is to produce better outcomes.

Finally, one of the biggest impediments to data-driven approaches to almost anything, be it baseball, politics, or medicine, is the perception that such approaches take away the "human touch" or "human judgment." The problem, of course, is that human judgment is often not that reliable, given how we are so prone to cognitive quirks that lead us astray. However, as Phillips et al point out, data-driven approaches need not be in conflict with recognizing the importance of contextualized judgment. After all, data-driven approaches depend on the assumptions behind the models, and we'll never be able to take judgment out of developing the assumptions that shape them. What the "moneyball" revolution has shown us, at least in baseball and politics, is that the opinions of experts can no longer be viewed as sacrosanct, given how often they conflict with evidence. The same is likely to be true in medicine.

Somewhat off-topic, but speaking of blind spots for CAM, Nature has a polite take on NCCAM's chelation trial for treating heart disease (I'm not pointing fingers at Nature!). Kimball Atwood, Steve Novella and others' views feature.

http://www.nature.com/news/chelation-therapy-heart-trial-draws-fire-1.1…

While off-topic, I figure some here will want to read this.

OT but not really OT:

Today Jake @ AoA writes about his critic, Dr DG, who wears glasses.

( very nice ones -btw-)

Fundamentally, I think the resistance to data-informed approaches to analysis & decision-making, whether it be in baseball team management, political prognostication, or medical practice, follows from the assumption that humans' intuitive thinking & decision-making is superior.

This assumption turns out to be false, both from first principles (given the inherent psychological biases & heuristics with which we are all endowed) and from the empirical evidence.

Apart from the points made by Orac above, there is the famous case of the triumph of data-informed analysis & decision-making via British Operations Research during the Second World War.

@ DW, oh aren't little Jake's mental musings, well, amusing? Such a pity his useless arse is wasting space in an MPH programme and not a whit of epidemiology chops to show for it. I can only hope the college received a healthy endowment from his parents to get him in the door and will use it a bit more wisely in the future. Although if that's the case, there are undoubtedly ludicrous caveats attached to it.

Oh and our friend Jen wants to verify some frothy driveling from another unhinged AoA commentor.

@ DW and SM

umm.....ADDM, for Alabama, a single state's data on prevalence; aren't there 51 states in the US? How does that compare to a Bayesian analysis of epidemiological studies of autism...

Alain

We haven't decided whether we really want Puerto Rico yet. We'll see what happens in the performance section of the contest. DANCE, PUERTO RICO, DANCE!

With respect to the 2010 elections, when Nate Silver predicted a Republican landslide, you say that the Democrats howled. I am interested in which Democrats howled, and whether they said that Silver was making things up.

http://www.samefacts.com/2010/10/democrats-in-congress/tin-cup-time-mic… is one post I found from a very liberal-leaning website in which Silver’s predictions are duly noted and are accepted as valid, with a note concerning the Colorado senate race which turned out to be correct. One counterexample does not refute the assertion that the Democrats howled in the face of an inconvenient truth, but it does create reasons for doubt.

It is widely assumed that both parties are the same when it comes to dealing with reality; is this in fact the case? Were the attacks on Silver equally strident and were the denials of his evidence-based analyses equally obtuse?

Responsiveness to reality may be unequally distributed across party lines, easy though it is to say that both sides are the same.

Dude, this is Jake Crosby we're talking about here. Don't try to apply logic to his thought processes.

Anyone interested in a standalone Windows program that will preview your comments before you post them?

Here's one I prepared earlier. Simple, free, and should run as is on most PCs. Older PCs may require .NET Framework installation.

Dude, this is Jake Crosby we’re talking about here. Don’t try to apply logic to his thought processes.

Sigh, I guess we have our strength in logic, it's just the prior probabilities that differ...

Alain

Forgot to add, our prior probabilities differ by a crater the size of the Grand Canyon :D

Alain

@Krebiozen,

Yes, I am. Thanks. This is my first message where I attempt to use the new preview editor.

Nifty. Does it support super/subscripts as well?

@ Krebiozen,

I could install monodevelop on my system to port your application to Linux.

Alain

Mostly off-topic, but only "mostly."

One of my co-workers is also a Tigers fan, Orac. We only ribbed him a little bit - in our San Francisco office about 2 blocks from the ball park...

Though more on topic, why does the CAM argument seem to come down to: "You don't have enough data. Therefore we prefer to pull decisions out of our butts."

M.O'B.,

It should do, but it uses the Visual Basic webbrowser so I'm not sure they will be the same as Wordpress. That's why I didn't include them in the guide. Let's see:

This works as superscript on the previewer

And this works as subscript

Fingers crossed!

M.O'B.,

Humph. No it doesn't, and there's no easy way to make it do so. Sorry about that.

@Krebiozen,

It's an achievement regardless. Thanks.

The sports thing reminds me of an online game I used to play a long time ago called Silencer. A lot of newbies would load up on weapons and try to fight their way to victory. I was on a team called the SpeeDemons because running secrets to your base is how you win the game. Most weapons were pointless because your target is likely going to be out of range before you do significant damage. The newbies were looking for advantages in the wrong place.

On the science angle, good job of covering the issue of SBM vs. EBM once again. Prior plausibility is how we prevent self-deception from outliers and flukes.

I have a couple of on-topic thoughts,

Firstly, the use of statistics in sport and medicine reminds me of Durkheim's social facts, in the way statistics can let us see things that are otherwise invisible. The best known example of a social fact is that there are far fewer reported suicides in Catholic countries than in Protestant ones, for various reasons.

Secondly, I don't think enough attention is paid to effect sizes in clinical trials, in other words clinical efficacy. So many of the results we see being paraded as evidence of acupuncture, homeopathy etc. are barely statistically significant and not clinically significant at all. What's the use of that?

Mephistopheles O'Brien,

You're welcome. Of course you still have to spot typos before posting, but it does help with the missing tags and similar blunders that I and others frequently suffer from.

I think the resistance to data-informed approaches to analysis & decision-making, whether it be in baseball team management, political prognostication, or medical practice, follows from the assumption that humans’ intuitive thinking & decision-making is superior.

Robyn Dawes and his colleagues showed that psychotherapy works no better than benign neglect; and that basing decisions about job recruitment, parole, and college acceptance on 'intuition' and 'clinical judgement' always gives worse results than resorting to objective criteria.

This has not led to the abandonment of psychotherapy, job-interview panels or parole boards, so evidently the proponents of 'human judgement' have nothing to fear.

Looks like we're stuck with 'clinical judgement' and its analogs, so when people are refused parole because of someone else's intuition, or a business fails because someone on a job-interview panel recognised a kindred spirit, they must understand that they have been sacrificed to the worthy cause of allowing more influential people to go on feeling indispensable.

I see Denice Walter beat me to it.. I have a comment stuck in moderation here, about the Boy Wonder Ace Reporter delusional epidemiologist wannabe's post.

Isn't that sweet...and disingenuous of Jake...to be *calling* the end of thimerosal-induced-autism, based on the "current Alabama ADDM autism prevalence report". Meanwhile, his colleagues at AoA keep harping on an increase of autism prevalence.

Jen a.k.a. jened wants *confirmation* that Orac advised his friends to not vaccinate their children, heh, heh.

3-2-1 cue to Jen/jened for another comment directed at me.

With respect to the 2010 elections, when Nate Silver predicted a Republican landslide, you say that the Democrats howled. I am interested in which Democrats howled, and whether they said that Silver was making things up.

I'm not sure I agree that Orac said that, but I strongly agree that in the last few cycles Democrats have been more reality-based.

Full disclosure - I hate both parties, but hate the Democrats considerably less. Another full disclosure, this is a very subjective comment based on casual impressions of a small sample. But anyway...

Nevertheless, I was fascinated by the fact that more or less literally 100% of right wing comments on Silver's blog predicted a Romney victory. They ranged from sneering rage at Silver as a "leftist" for posting a statistical analysis that mildly favored Obama, to more polite and sometimes deceptive posts.

Now, I might have expected an occasional "Romney is superior but fools will elect Obama" type of comment.* (*I do not personally agree that Romney was the superior candidate, and don't mean that as a compliment to Obama; it's just an example.) But no such comment ever came. It was all "Romney is sure to win" all the time.

The "liberal" comments had a tiresomely panicky and hand-wringing tone, but they went with the numbers. "Looks like we're winning" when Obama was up big, "Oh my god we're doomed voter suppression Karl Rove Citizens United we're doooommmedd" type comments when his margin shrank. But the correlation with the numbers was near 100%.

The right wing comments were a broken clock - they all always said Romney would win, no matter what the data showed. Their comments provided no information at all (about the election, that is). You could easily gauge how Obama had done in recent polls just by reading "liberal" comments, but the "wingnut" comments didn't vary with the data.

@ lilady:

I keep my finger on the pulse of woo..

but am sure to wash my hands thoroughly afterwards.

I'm trying to decipher what Jake is talking about and can only come to two conclusions:

he takes everything literally and

his writing is a toxic admixture of dismal banality and cocksure self-congratulation. Not sure which is worse.

I studied assigning people to categories based upon their writing and while I would NEVER reveal *what* categories immediately spring to mind, well, I'm sure that you and other regulars can make reasonably decent guesses.

he takes everything literally

Yes and he's not growing out of this which is reinforced by his fans (both on AoA and real life).

his writing is a toxic admixture of dismal banality and cocksure self-congratulation.

The self congratulation, maybe it came with the crowd he's having.

Finally, which category am I? Inquiring minds want to know :)

Alain

@ Alain:

Neither: you're an interesting, smart fellow in two languages.

Thank you very much, Denice.

Alain

Harold:

I am not certain I grasped Orac's intent either, but the reality testing does appear to be done with data by one party and by subjective considerations by the other.

There are differences between the parties in their acceptance of scientific data as the best way to estimate climate change as well. No need to elaborate on that.

The Democrats seem to have by and large taken Nate Silver's calculations seriously in 2010, but I am sure there may be counterexamples from some blog site or other. The issue I am challenging Orac on has to do with whether there is evidence to imply that the howls were equal in volume and in the prominence in the party of the howlers. In the absence of same, "the Democrats howled" appears to be more impressionistic than evidence-driven.

Symmetry of the parties is also worth scrutinizing with respect to the current Republican response to the embassy attack in Libya. They are calling for hearings into the matter in order to see what the Obama administration did wrong. There were, however, numerous attacks on American consulates and embassies under GOP administrations, documented at:

http://mediamatters.org/research/2012/09/14/krauthammer-whitewashes-bus…

These include some attacks with fatalities:

Karachi 6-15-02 10 killed

Uzbekistan 7-13-04 5 killed

Yemen 9-17-08 10 killed

Beirut 4-18-83 60 killed

Other lethal attacks from the same link included Jidda, Saudi Arabia, 12-6-04, 8 dead and Damascus 9-13-06 with one dead. Attacks without fatalities in Serbia 2008 and Athens 2007 could be noted as well.

In each of those attacks on our embassies during Republican administrations, Democrats saw the incidents as attacks on us, not as scandals by us. Their response was to rally round the flag when the attacks became known.

The two parties appear to be made from different political DNA. This business goes beyond methods of reality testing, and strikes at something much deeper in our society.

@ Denice Walter: About the Boy Wonder....

IMO, he's done some cut and paste quotes (definitely out of context), from Orac's older blogs and from his comments on the SBM blog, regarding vaccines. He really is fixated on Orac. Where the hell did he get that old picture from...a college yearbook?

IIRC, the last time he posted here, herr doktor bimler challenged him about one of his statements (parents of autistic kids wishing them dead). He "just disappeared"...after that. I chased him off a Ho-Po blog about six months ago, when he was touting Kirby's book.

Come on now, Alain. You know you are one of the "RI Regulars", respected for your opinions and your comments.

Come on now, Alain. You know you are one of the “RI Regulars”, respected for your opinions and your comments.

Fair enough, but keep in mind that I have been diagnosed with PTSD, which came about because random people (whom I didn't know) labelled me and pointed fingers at me on various university campuses here and on the city bus.

This isn't the only cause of my PTSD but for the rest, I'll keep that private.

Now, I do recognize that I shouldn't worry about it here but I am being treated for it (by my p-doc and a cross-over clinical trial) and I still have to make progress and this include, not worrying about it here (such as when I was alerted about a certain Alain posting on another site, I think it was Alain Clouvier, right?).

Alain

I don't think the Democrats so much "howled at" Silver's predictions as ignored them, in the sense of the party leadership not changing their GOTV strategy and letting up on the 50-state strategy that had worked so well for them in '06 and '08.

Now I get to wonder, did I scare anyone with my story?

Alain

No Alain and I didn't think you were the other Alain for a second.

This explains so much. As an outsider, the election did indeed look close. I noticed a lot of lack of surprise from Americans, and now I know why! -- Also, fascinating stuff about baseball stats.

This may be an example of generalizing from a sample of one. In 2004, the last-minute polls reported in the media were skewed. Some Republican pundits thought there was a repeat of this at each election ever since.

Ed Whitney: Firedoglake noted well over a year in advance that the Republicans were going to romp in 2010. They did polling in Vic Snyder's CD that seems to have matched up so well with his own internal polling (and was greatly at odds with other polling) that he bailed from his congressional race the day after the FDL poll results were made public. FDL polling similarly predicted a gloomy electoral fate for Ohio's Steve Driehaus. In fact, FDL polling predicted

The response of many pundits was to accuse FDL of meddling in the 2010 election when all it did was to have accurate polling.

Computer crashed, so I need a cookie.