In their wonderful Neuroimage article, Braun & Mattia present a comprehensive introduction to the possible neuronal implementations and cognitive sequelae of a particular dynamical phenomenon: the attractor state. In another excellent paper, just recently out in Frontiers, Itskov, Hansel and Tsodyks describe how such attractor dynamics may be insufficient to support working memory processing unless supplemented by rapid synaptic modification - a mechanism which has in fact been described neuroanatomically and previously utilized neurocomputationally to describe cognitive phenomena. To see how these ideas tie together a number of different neuroanatomical and cognitive discoveries, let's start with the basics of attraction.

Attractors are those patterns in some abstract state space (e.g., a 3-dimensional space defined by the firing rates of 3 different neurons) towards which a system will naturally converge over time as it loses energy. These can be simple points (say, where regardless of its initial conditions, our 3-neuron system will always end up with firing rates of 0, 0, and 1 Hz respectively), lines (where our 3-neuron system might end up with firing rates of 0, 0, and between .3 and .7 Hz respectively), rings (where our 3-neuron system might ultimately end somewhere along a path encircling the point .5, .5, .5), or shapes with fractional dimensions (e.g., the classic "Lorenz attractor"). Systems can have multiple attractors of any type; the "energy landscape" of a dynamical system can be plotted to show how different initial conditions ultimately fall into the "basin of attraction" of the various attractors. Here's an example of the energy landscape of a multi-point attractor system, where the point attractors are illustrated in red:
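For readers who prefer numbers to pictures, the idea of a basin of attraction can also be sketched in a few lines of Python. This is only a toy under stated assumptions: a single state variable `x` stands in for the whole population, and the double-well function `energy` (with point attractors at -1 and +1) is a landscape I made up for illustration, not one taken from either paper.

```python
def energy(x):
    """Hypothetical double-well landscape; its minima at x = -1 and x = +1
    play the role of two point attractors."""
    return (x * x - 1.0) ** 2


def energy_gradient(x):
    """Slope of the landscape, dE/dx."""
    return 4.0 * x * (x * x - 1.0)


def settle(x0, dt=0.01, steps=2000):
    """Let the state roll downhill (Euler steps of dx/dt = -dE/dx)
    and return where it ends up."""
    x = x0
    for _ in range(steps):
        x -= dt * energy_gradient(x)
    return x


if __name__ == "__main__":
    # Every negative start lands in the left basin, every positive start in the right.
    for x0 in (-2.0, -0.3, 0.3, 2.0):
        print(f"start {x0:+.1f} -> settles near {settle(x0):+.3f}")
```

The point of the sketch is just that the final state depends only on which side of the ridge at `x = 0` the system starts on, which is what "basin of attraction" means.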

But attractor dynamics can be far more complex. As pointed out by Braun & Mattia, neural dynamics may smoothly traverse multiple attractor states if, upon reaching a point of attraction, the energy landscape of the neural population changes (say, as a result of neuronal fatigue in the neurons supporting the pattern of firing that comprises the attractor). As such, neuronal dynamics might be understood as traversing an energy landscape that is itself composed of multiple such landscapes - that is, a kind of attractor dynamic of attractors, or what Braun & Mattia term a "metastable state."
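A rough way to see how fatigue can push a system from one attractor to the next is to let the landscape tilt against whichever attractor is currently occupied. The sketch below is my own caricature, not Braun & Mattia's model: `x` is a one-dimensional stand-in for population state, `a` is a hypothetical fatigue variable that slowly tracks `x`, and the parameters `eps` and `k` are arbitrary choices. The energy is assumed to be E(x; a) = x^4/4 - x^2/2 + a*x.

```python
def simulate(eps=0.05, k=2.0, dt=0.01, steps=20000, x0=0.1):
    """Double-well dynamics whose landscape is tilted by slow fatigue.

    x : state of the population (x > 0 is one attractor, x < 0 the other)
    a : fatigue; builds up against whichever attractor is occupied
    Returns the number of attractor-to-attractor switches and the x trajectory.
    """
    x, a = x0, 0.0
    switches = 0
    xs = []
    for _ in range(steps):
        dx = x - x ** 3 - a      # roll downhill on E(x; a) = x^4/4 - x^2/2 + a*x
        da = eps * (k * x - a)   # fatigue slowly follows the current state
        new_x = x + dt * dx
        a += dt * da
        if (new_x > 0) != (x > 0):
            switches += 1        # the state crossed into the other basin
        x = new_x
        xs.append(x)
    return switches, xs


if __name__ == "__main__":
    with_fatigue, _ = simulate(eps=0.05)
    without_fatigue, _ = simulate(eps=0.0)
    print("switches with fatigue:", with_fatigue)
    print("switches without fatigue:", without_fatigue)
```

With `eps=0.0` the landscape never changes and the state simply sits in the first attractor it finds; with fatigue switched on, the occupied well gradually fills in until the state spills over into the other one, and the cycle repeats.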

As reviewed by Braun & Mattia, slices of visual, auditory and somatosensory cortex demonstrate spontaneous patterns of firing that are almost identical to those observed following stimulation of the thalamic areas that innervate them. This observation suggests that the cellular architecture of these regions defines a state space that is remarkably metastable, with spontaneous activity reflecting a serial transition through attractors within this state space. Spontaneous activity of this kind gives rise to a kind of "avalanche" dynamic in which synchronous neural firing in superficial cortical layers triggers a chain reaction of avalanches across interconnected cortical sites.

These physiological dynamics, as well as those from the domain of perceptual decision making (and the associated signal detection and diffusion models of this domain), are well captured by neural network models in which lateral inhibitory competitive dynamics, superimposed on sparse excitation, support winner-take-all processing. Diffusion of perceptual information into the system can be understood as the neuronal population being perturbed from its initial low-energy state, and haphazardly navigating the energy landscape of the state space until a basin of attraction is found and the lowest-energy attractor reached.
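To give a feel for this kind of competition, here is a minimal two-unit sketch, assuming a simple rate model with self-excitation, mutual (lateral) inhibition, and noisy input. None of the names or numbers (`w_self`, `w_inh`, `noise_sd`, the 0-to-1 clipping) come from the papers discussed here; they are illustrative assumptions only.

```python
import random


def clip01(v):
    """Keep a drive between 0 (silent) and 1 (saturated)."""
    return max(0.0, min(1.0, v))


def winner_take_all(inputs, w_self=0.5, w_inh=1.0, noise_sd=0.1,
                    dt=0.01, steps=2000, seed=0):
    """Two units compete: each excites itself and inhibits the other.

    inputs : pair of constant evidence levels, one per unit
    Returns (index of the winning unit, final firing rates).
    """
    rng = random.Random(seed)
    r = [0.0, 0.0]
    for _ in range(steps):
        drive = []
        for i in (0, 1):
            j = 1 - i
            total = (inputs[i] + w_self * r[i] - w_inh * r[j]
                     + rng.gauss(0.0, noise_sd))  # noisy evidence
            drive.append(clip01(total))
        # Leaky integration of each unit's rate toward its current drive.
        r = [r[i] + dt * (drive[i] - r[i]) for i in (0, 1)]
    winner = 0 if r[0] > r[1] else 1
    return winner, r


if __name__ == "__main__":
    winner, rates = winner_take_all((0.6, 0.4))
    print("winner:", winner, "final rates:", [round(v, 2) for v in rates])
```

Both units start silent and rise together, but the small advantage of the better-supported unit is amplified by the inhibition until one unit saturates and the other is shut off, which is the sense in which the system "finds" a basin of attraction.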

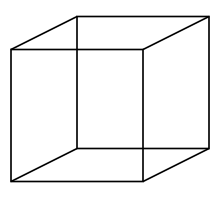

One related perceptual domain is that of bistable perception, classically illustrated by the two possible depth interpretations of the Necker cube:

But a more fun illustration of this phenomenon is the "dancer" animation. Which way does the dancer turn in your perception? And can you see the dancer turn in the other direction?

(Take a minute or two with that one if you fail to see her reverse; it's a sudden but unpredictable shift). One last one, since these are so much fun:

One can understand the perceptual transitions between these various interpretations as the state space traversal of neuronal populations responsible for depth and motion (respectively in the above two examples) between two different points of attraction, such that the energy landscape is itself dynamic. At a higher level, it could be understood as a kind of nested attractor, where there is a ring attractor that governs transitions between two point attractors.

Interestingly, tri-stable percepts can also be found. Transitions between the three interpretations of these ambiguous stimuli (which we'll call A, B, and C) are temporally interdependent, as would be expected if neuronal fatigue is driving the transition among the various points in state space. Here's an example - you should be able to see motion towards the left, the right, or straight up.

As reported by Naber et al., the shorter a percept lasted and the longer it has been since it last appeared, the more likely it is to re-appear.

(Interestingly, it has been noted that the right ventrolateral prefrontal cortex, the focus of yesterday's excruciating post, tracks the duration of transitional states in these multistable perceptions [as newly reported by Knapen et al], possibly suggestive of a role for the rVLPFC in detecting [but not initiating] shifts in the energy landscape of neuronal state space. Such a role would also be consistent with this area's functionally-interposed membership with the default and task-positive networks).

Braun & Mattia suggest that such nested attractors may also reflect a hierarchical structure of anatomical connectivity, either strictly corticocortical or more regionally diverse (e.g., nested cortico-striatal loops).

Particularly relevant to this latter point is a recent computational exploration of the details of such attractor networks, presented by Itskov, Hansel, and Tsodyks. Itskov et al rightly point out that, while appealing in principle, attractor dynamics in what I'll call "runnable" neural network models can require exquisite hand tuning, and are particularly sensitive to noise in connectivity or activation. Although widely hypothesized to be a mechanism for the active maintenance of information over time, the noisy nature of the brain could be taken to imply that frameworks like Braun & Mattia's cannot actually apply to working memory in the physically-realized brain.

However, Itskov et al's elegant computational modeling work demonstrates that, so long as the connectivity is sufficient to support the presence of an attractor in response to a stimulus in the first place, that attractor can be stably maintained even in the absence of this stimulus so long as there is relatively minor and short-term, Hebbian-like synaptic facilitation of the weights of the units participating in the attractor. Without this form of short-term weight change, plausible levels of noise in activation and connectivity are enough to so seriously damage the attractors that no delay-period stimulus maintenance is possible.
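The flavor of this result can be conveyed with a very stripped-down toy, which I should stress is not Itskov et al's model. Here a single self-exciting population has a recurrent weight `w0` that is assumed to be 10% too weak to hold activity on its own (a crude stand-in for mis-tuned or noisy connectivity), and a hypothetical facilitation variable `u` boosts that weight in proportion to recent activity. All parameter names and values are my own assumptions.

```python
def delay_activity(facilitation_gain, w0=0.9, tau_f=2.0, dt=0.01,
                   stim_steps=200, delay_steps=5000):
    """Return the firing rate at the end of a delay period.

    facilitation_gain : how strongly activity boosts the recurrent weight
                        (0.0 means no short-term facilitation at all)
    w0                : baseline recurrent weight, deliberately too weak (< 1)
    tau_f             : time constant over which facilitation decays
    """
    r, u = 0.0, 0.0
    for step in range(stim_steps + delay_steps):
        stimulus = 1.0 if step < stim_steps else 0.0   # stimulus, then delay
        w_eff = w0 * (1.0 + u)                         # facilitated weight
        drive = max(0.0, min(1.0, w_eff * r + stimulus))
        dr = drive - r
        du = -u / tau_f + facilitation_gain * r        # use-dependent boost
        r += dt * dr
        u += dt * du
    return r


if __name__ == "__main__":
    print("with facilitation:   ", round(delay_activity(0.2), 3))
    print("without facilitation:", round(delay_activity(0.0), 3))
```

In this toy, the un-facilitated population leaks away over the delay because its recurrent excitation falls just short of self-sustaining, whereas a modest, transient boost to the same weight is enough to keep the activity alive after the stimulus is gone.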

What's particularly interesting about this solution - in conjunction with the metastable nested attractor framework of Braun & Mattia - is that it confirms that attractor dynamics could indeed be a mechanism by which hierarchical frontal and frontostriatal processing occurs. It matches not only with previous computational models (e.g., that of Reynolds et al., who demonstrate that short-term synaptic facilitation in the prefrontal cortex may be important for capturing some important task-switching phenomena) but also with detailed neurophysiological investigations which confirm that, indeed, prefrontal neurons show more short-term synaptic facilitation effects than are observed in posterior sensory cortex. To be clear, I don't think this work settles the debate about whether the short-term facilitation is necessarily weight-based in nature, or whether it might instead be due to some kind of thalamocortical positive feedback loop (indeed, recent data from Freyer et al in the Journal of Neuroscience appear to be suggestive of the latter, with respect to the human alpha rhythm in the resting state, and a related paper implies this phenomenon may be functionally interdependent with stimulus-evoked BOLD). Certainly both are present and operative, and this work provides further justification for believing there are important functional consequences to these neuroanatomical features.

Another animation with bistable perception, of a planar figure:

http://markdow.deviantart.com/art/Thue-Morse-in-rabbit-land-49687417

And an animated variation of the Necker cube with four stable percepts:

http://markdow.deviantart.com/art/Enigmatic-cubes-106688257