“He who loves practice without theory is like the sailor who boards ship without a rudder and compass and never knows where he may cast.” -Leonardo Da Vinci

One of the great tools in a theorist's arsenal is the reductio ad absurdum: the notion that if you can prove that accepting a scientific theory leads to an unacceptable prediction, one known to conflict with experiment, then the theory itself must be rejected. But there's a catch.

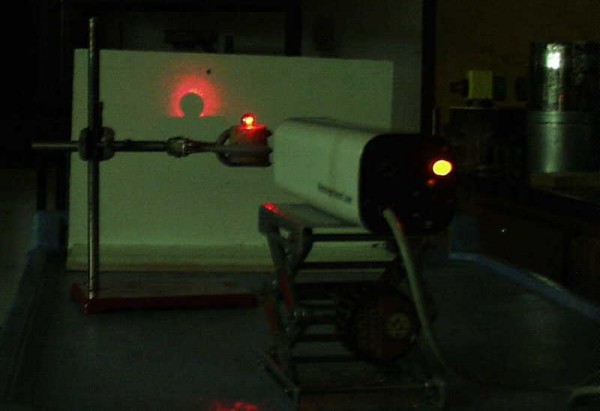

You see, if it's an experiment that hasn't been rigorously performed before, you must actually perform it, no matter how ridiculous the predictions seem to us. After all, nature is full of counterintuitive rules, laws and phenomena that we never would have uncovered if we hadn't subjected it to the ultimate test: scientifically rigorous experiments!

Come learn about the most amazing story of theoretical hubris -- and experimental vindication -- in all of physics history!

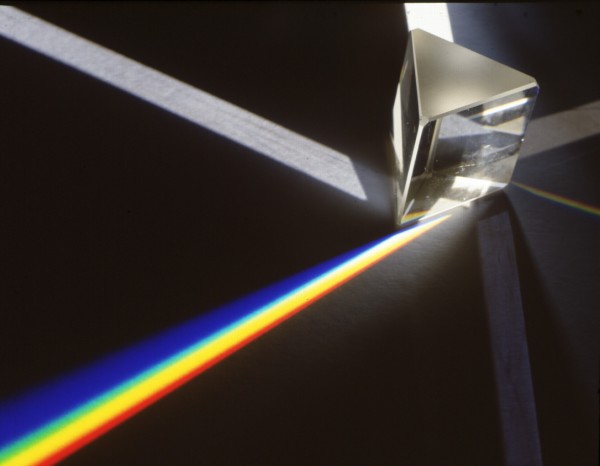

However, even Newton had a clue that light is a wave. He observed the so-called Newton's rings, but he gave up on explaining them.

What a cool story! Can you explain more about the intensity chart? I was wondering a few things:

1) Does it actually touch zero at any point, or does everywhere have at least a tiny probability of getting a photon?

2) What are the steps on the curves - and why no steps for the smooth curves either side of the spot?

3) What's the pair of 'squashed wave' anomalies at +/- 2.5?

Hi Ethan,

In the article you say "This sort of phenomenon could not be accounted for with any ray-based (or corpuscular-based) theory of light, but rather required that light fundamentally behaved as a wave."

What about the theory that photons are pushed around by some kind of "pilot wave"? (Not that I believe it).

I think the double slit experiment is very good evidence that we live in a quantum multiverse if not a cosmological multiverse.

I run into this in software. No matter how simple the code, if it isn't tested, it doesn't work. I've seen this over and over.

This sort of reminds me of Bell's Theorem (née Hypothesis), which was experimentally confirmed by John Clauser (née right guy in the right place).